When Hurricane Milton made landfall in late 2024, parametric policyholders received payouts within days. Traditional claimants? Many waited months. That gap is not a quirk of bureaucracy. It is a structural failure, and it is widening with every hurricane season.

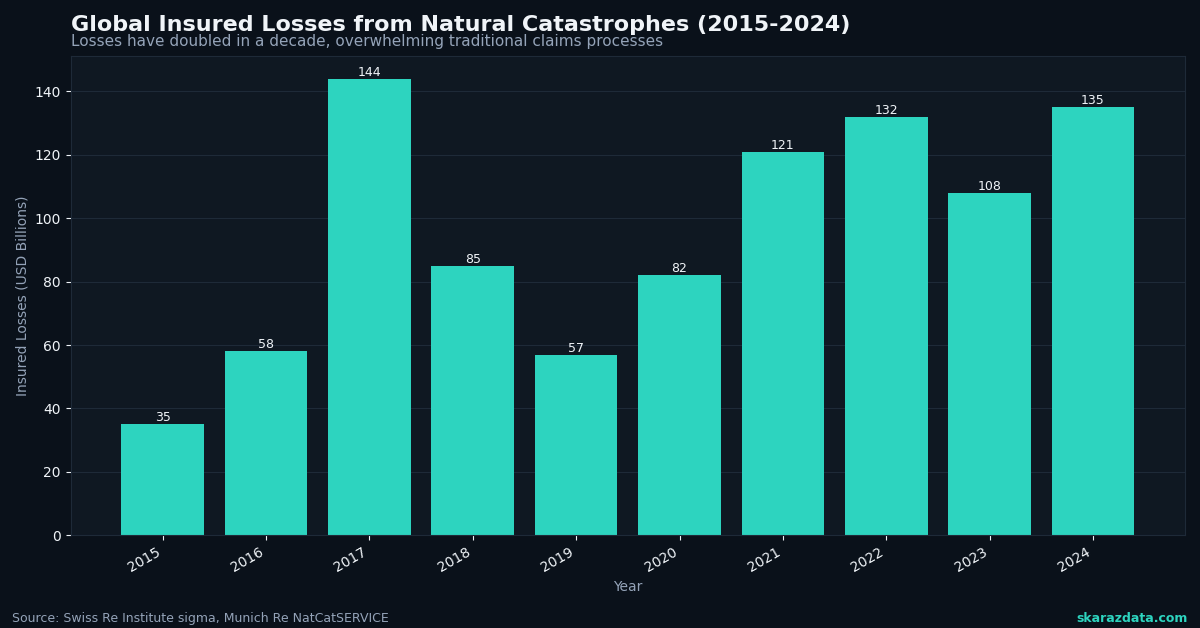

The insurance industry likes to talk about “closing the protection gap.” But here is the uncomfortable truth: traditional indemnity insurance was designed for a world where disasters were rare, localized, and measurable after the fact. None of those assumptions hold anymore. Global insured losses from natural catastrophes exceeded $130 billion in 2024, and the trend line points in one direction. The old model of sending adjusters to inspect damage, negotiate claims, and process paperwork over weeks or months simply does not scale when entire regions are hit simultaneously.

Parametric insurance offers a fundamentally different architecture. And the key word there is architecture, not just product.

How Parametric Actually Works (And Why Data Is the Product)

A parametric policy pays out when a predefined trigger is met: wind speed exceeds 130 mph at a specific weather station, rainfall crosses 200mm in 48 hours, or satellite-derived flood extent covers a defined area. No adjuster visits. No claim negotiation. The data source is the arbiter.

This sounds elegant, and it is. But it also shifts the entire risk management problem from post-event assessment to pre-event data engineering. The quality of the product depends entirely on:

- Trigger design: choosing the right variable, threshold, and geographic resolution

- Data source reliability: ensuring the index (weather station, satellite, IoT sensor) is accurate, tamper-resistant, and consistently available

- Basis risk management: minimizing the gap between what the index measures and what the policyholder actually experiences

- Historical calibration: building actuarial models from decades of geospatial and meteorological records

Arbol, the insurtech profiled by McKinsey, has built its entire business on this premise. Their platform ingests over 1 billion data points daily from weather stations, satellite imagery, and IoT networks to price and trigger parametric contracts. The insurance product is, in practice, a data pipeline with a payment mechanism attached.

The Scalability Problem Traditional Insurance Cannot Solve

Consider what happens when a Category 4 hurricane hits a metro area of 2 million people. Under the traditional model, insurers need to:

- Deploy hundreds of adjusters to inspect individual properties

- Process thousands of claims, each requiring documentation and negotiation

- Handle disputes, appeals, and litigation

- Manage reinsurance recoveries across multiple layers

This process can take 6 to 18 months for complex events. During that time, communities cannot rebuild, businesses close permanently, and economic damage compounds far beyond the initial physical loss.

Fremont, California recently demonstrated an alternative. The city purchased citywide parametric flood coverage through Floodbase, triggered by satellite-detected flood extent. If flooding hits, the city receives funds within weeks to deploy toward recovery, without waiting for federal disaster declarations or individual claim processing.

This is not a marginal improvement. It is a different category of response. And it is only possible because satellite-derived flood mapping has reached the resolution and reliability needed to serve as a contractual trigger.

The Data Engineering Challenge Behind the Curtain

Parametric insurance sounds like it solves everything, but the technical challenges are significant. Every trigger design decision involves tradeoffs that require deep domain expertise in both climate science and data engineering:

- Spatial resolution: a weather station 20km from the insured location may not capture localized convective storms. Gridded reanalysis products (ERA5, CHIRPS) offer better coverage but introduce interpolation uncertainty.

- Temporal granularity: daily aggregates can miss short-duration extreme events. Hourly or sub-hourly data improves trigger accuracy but increases storage and processing costs by orders of magnitude.

- Historical depth: actuarial pricing requires 30 to 50 years of consistent records. Satellite datasets like Landsat go back to 1972, but sensor changes across missions create discontinuities that need careful harmonization.

- Real-time delivery: triggers must fire reliably within hours of an event. This requires robust data pipelines with monitoring, failover, and validation at every stage.

The UNDP’s recent partnership with Swiss insurance operators highlights another dimension: in emerging markets, ground-truth data is sparse. Building parametric products for smallholder farmers in Sub-Saharan Africa or Southeast Asia requires creative combinations of satellite remote sensing, mobile weather stations, and crop modeling to construct reliable indices where traditional data infrastructure does not exist.

Cat Bonds and the Capital Markets Connection

Parametric structures are also reshaping how disaster risk flows into capital markets. Catastrophe bonds, which transfer insurance risk to investors, increasingly use parametric triggers rather than indemnity-based loss calculations. The Climate and Community Institute notes that cat bond issuance has grown steadily, with parametric structures preferred for their transparency and speed of settlement.

For investors, parametric triggers eliminate moral hazard and reduce information asymmetry. For issuers, they simplify the claims process and reduce basis risk disputes. The result is a more liquid, more efficient market for disaster risk transfer.

But this efficiency depends entirely on the credibility of the underlying data. A parametric cat bond triggered by NOAA wind speed measurements carries different risk characteristics than one triggered by a proprietary satellite-derived index. Data provenance, validation methodology, and historical back-testing are not just technical details. They are the foundation of contract enforceability.

What Comes Next

The trajectory is clear. As climate volatility increases, traditional loss adjustment becomes slower, more expensive, and less reliable. Parametric insurance scales because it replaces human inspection with data infrastructure. But that infrastructure must be built correctly: robust pipelines, validated indices, transparent methodologies, and continuous monitoring.

The winners in this space will not be the companies with the best marketing or the slickest app. They will be the ones with the most reliable data engineering, the deepest climate science expertise, and the ability to translate geospatial datasets into actuarially sound trigger designs.

At Skaraz Data, we work at exactly this intersection: building the climate data pipelines, geospatial processing workflows, and analytical infrastructure that make parametric products possible. If your organization is exploring parametric solutions or needs to strengthen the data foundation behind your climate risk models, that is a conversation worth having.